Things we learned while creating Google Assistant voice apps

In this blog post, Christiaan shares his insights and tips on developing voice apps for Google Assistant using Dialogflow.

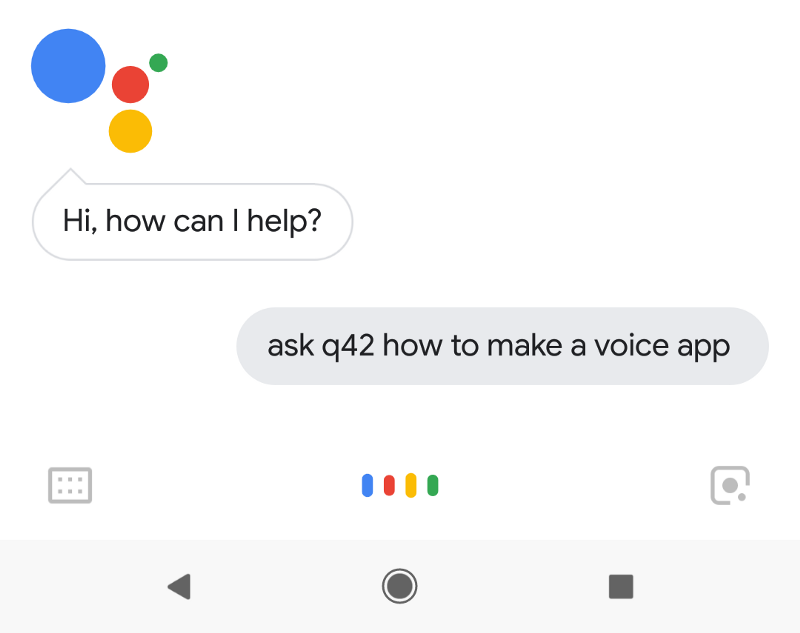

Ok Google, ask Q42 how to make a voice app

Okay. Let’s get Q42.

Hi! This is Christiaan from Q42. Q42 is a strategic technology agency from the Netherlands and we recently made several voice apps. We learned a lot along the way and I can help you with your technical questions. What would you like to know?

What do you need to make a voice app?

We use Actions on Google with Dialogflow and Cloud Functions for Firebase for the fulfillment webhooks.

What does Dialogflow actually do?

Dialogflow handles the natural language processing. When a user says “How is the weather in Amsterdam?”, Actions on Google translates this into a string and gives it to Dialogflow. Dialogflow then parses that string and recognizes that the user wants weather info and that Amsterdam is a city. It then makes a fulfillment call to a weather API to figure out what the actual weather is.

Tell me more I WANT TO EAT PANCAKES!

Sorry, I don’t understand.

Doh, kids are talking. Can you tell me more about the fulfillment?

Ah yes, when Dialogflow gets a request and needs to call an external service to look up some extra info, it uses a fulfillment webhook. In our case it makes a call to our Google Cloud Function, which talks to our backend systems and then returns the info to Dialogflow. The Cloud Functions run on Node.js and we use TypeScript to write them.
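To make that concrete, here is a minimal sketch of what a fulfillment handler does, stripped of the Firebase and Actions on Google plumbing so it runs on its own. The interfaces model only a small subset of the Dialogflow v2 webhook format (`queryResult.intent.displayName`, `queryResult.parameters` and `fulfillmentText`). The `Weather` intent name, the `city` parameter and the `lookUpWeather` stub are assumptions for illustration, not production code.

```typescript
// Simplified subset of a Dialogflow v2 webhook request.
interface WebhookRequest {
  queryResult: {
    intent: { displayName: string };
    parameters: { [name: string]: string };
  };
}

// Simplified subset of a Dialogflow v2 webhook response.
interface WebhookResponse {
  fulfillmentText: string;
}

// Hypothetical stand-in for a call to a backend system.
function lookUpWeather(city: string): string {
  const forecasts: { [city: string]: string } = { Amsterdam: "rainy" };
  return forecasts[city] || "unknown";
}

// Maps the matched intent and its parameters to a spoken reply.
function handleWebhook(request: WebhookRequest): WebhookResponse {
  const { intent, parameters } = request.queryResult;
  if (intent.displayName === "Weather") {
    const city = parameters["city"];
    return { fulfillmentText: `The weather in ${city} is ${lookUpWeather(city)}.` };
  }
  // Any intent this sketch does not know about gets a fallback reply.
  return { fulfillmentText: "Sorry, I don't understand." };
}
```

In a real project the same logic would sit behind an HTTPS Cloud Function, and the client library's helpers would replace the hand-written types.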

TypeScript? Why?

Because it makes writing JavaScript much nicer. The Actions on Google Client Library (version 2) is itself written in TypeScript, so the integration is really nice. When you’re working in Visual Studio Code you can just type new LinkOutSuggestion( and autocomplete will tell you that you need a name and a url parameter. Also, since Cloud Functions still run on Node 6, you normally can’t use nice features like async/await, but with TypeScript you can, and it will just compile them down to code that old Node versions understand.
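Here is a small sketch of the async/await style this enables. The `fetchForecast` stub and its data are made up for illustration; in a real function it would be a call to a backend. With a compiler target such as ES2015, TypeScript rewrites the `await` into code that older Node versions can run.

```typescript
// Hypothetical stand-in for a slow backend call; resolves after a short delay.
function fetchForecast(city: string): Promise<string> {
  const forecasts: { [city: string]: string } = { Amsterdam: "rainy" };
  return new Promise((resolve) => {
    setTimeout(() => resolve(forecasts[city] || "unknown"), 10);
  });
}

// Reads top to bottom like synchronous code, but does not block while waiting.
async function describeWeather(city: string): Promise<string> {
  const forecast = await fetchForecast(city);
  return `The weather in ${city} is ${forecast}.`;
}

describeWeather("Amsterdam").then((text) => console.log(text));
```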

How did you set up your project?

Since we work with multiple developers on the same project, this gets a bit tricky. The Dialogflow UI doesn’t seem to handle multiple users editing in the same project very well, so we created a separate project for each developer. We also have a staging project that we deploy to when we have something working, and a production project that runs the stable code.

Each of these environments has its own Dialogflow, Actions on Google and Firebase project.

How do you get the content from one Dialogflow project into another?

After one developer changes some intents and content in Dialogflow we use our own dftool to export it to our local disk. We can then commit it to Git. Another developer can then merge it with their own changes and use dftool again to import it to the staging or production environment. This workflow also allows us to roll back to previous versions of our Dialogflow content in case we accidentally break something.

Do you also use that for local development?

Yes, during development we expose our local function through ngrok and tell Dialogflow to use it for the fulfillment. For example:

npm run dftool import my-project ../dialogflow https://6d5a5etc.ngrok.io/my-project/us-central1/MyFunction

From that moment on, all fulfillment calls in my-project will use that local server. This makes development much faster than if you had to deploy your function every time you made a small change.
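The fulfillment URL in that command is just the ngrok tunnel host followed by the path the local Firebase functions server uses: project ID, region, function name. Here is a tiny sketch of that layout. The helper name `buildFulfillmentUrl` is hypothetical and is not part of dftool.

```typescript
// Composes the URL Dialogflow should call during local development.
function buildFulfillmentUrl(
  tunnelHost: string,   // the host ngrok prints when it starts
  projectId: string,    // Firebase project ID
  functionName: string, // exported name of the Cloud Function
  region: string = "us-central1"
): string {
  return `https://${tunnelHost}/${projectId}/${region}/${functionName}`;
}

console.log(buildFulfillmentUrl("6d5a5etc.ngrok.io", "my-project", "MyFunction"));
// prints "https://6d5a5etc.ngrok.io/my-project/us-central1/MyFunction"
```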

I heard something about Dialogflow Enterprise Edition. Do I need that?

If you expect more than 1000 requests per day you’re gonna need the Enterprise Edition. The tricky part is that you can’t switch your project from Standard to Enterprise, so if you think you might hit that limit at some point, you’ll want to use the Enterprise Edition from the start.

In our case we created an Enterprise project for production and Standard projects for testing and development.

Do you have any other wisdom to share?

Sure! I can tell you about NVM, authentication, SSML and machine learning. What would you like to know?

nvm

If you’re working on modern Node.js projects you probably have Node 8 or 9 installed. For Google Cloud Functions you still need Node 6, so we use Node Version Manager to switch Node versions to whatever we need. This also made the recent update from Node 6.11.5 to 6.14.0 easy.

What about authentication?

You can use Account Linking to authenticate users so you can return personalized results. However, that doesn’t work in the simulator, which is a pity because the simulator is great for debugging: you can see all the requests, responses and error messages. We noticed that if you log in on your phone first, the accessToken of your account is automatically transferred to the simulator, which can then use it to make authenticated calls.

Another good trick to know is that if you want to log out you should click “Disable testing on device” and then re-enable it again.

What did you learn about SSML?

SSML allows you to define how to pronounce your text, for example by adding pauses or emphasis.

The thing you must realize is that this must all be valid XML. Text that contains a bare ‘&’ won’t work, for example.

The reason is that ‘&’ is a special character in XML, so you need to escape it as &amp;.

It will save you a lot of headache if you escape properly from the beginning of your project.
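One way to do that is to run every piece of dynamic text through a small escaping helper before it goes into the SSML. This is a minimal sketch; the function names `escapeXml` and `toSsml` are hypothetical and not part of any library.

```typescript
// Replaces the five characters that have special meaning in XML.
// The ampersand goes first, otherwise the other entities get escaped twice.
function escapeXml(text: string): string {
  return text
    .replace(/&/g, "&amp;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;")
    .replace(/"/g, "&quot;")
    .replace(/'/g, "&apos;");
}

// Wraps the escaped text in the <speak> root element that SSML requires.
function toSsml(text: string): string {
  return `<speak>${escapeXml(text)}</speak>`;
}

console.log(toSsml("Ben & Jerry's"));
// prints "<speak>Ben &amp; Jerry&apos;s</speak>"
```

Only escape the text you insert, not the SSML tags themselves, or the tags will be read out as literal text.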

I heard Machine Learning is hot! What do you know about it?

Dialogflow automagically uses machine learning to match natural language with known intents. Most of the time this works really well: users can say “What’s the weather?” or “How’s the weather?” and both will get matched to the Weather intent. However, sometimes it matches something completely unexpected. In the Training screen of Dialogflow you can see how it interprets things people say, and if it makes mistakes you can correct Dialogflow manually. So far we haven’t figured out why certain things don’t match well, and the solution seems to be mostly a matter of trial and error and lots of testing. We expect this to get better in the future.

What’s the website again of that Dialogflow tool you made?

The website is:

https://github.com/Q42/dftool

Is there anything else I can do for you?

Can you update the title of this article?

Sorry, I don’t understand.

Put an actual number in the title

Could you rephrase that?

Update title

I’m afraid I can’t help you with that.

Q42 left the conversation

Want to know more about our work with Google Assistant? Leave a comment below.